The Memo - 14/Nov/2025

ERNIE 5.0 2.4T, Xpeng IRON update, OpenAI 1M companies, and much more!

To: US Govt, major govts, Microsoft, Apple, NVIDIA, Alphabet, Amazon, Meta, Tesla, Citi, Tencent, IBM, & 10,000+ more recipients…

From: Dr Alan D. Thompson <LifeArchitect.ai>

Sent: 14/Nov/2025

Subject: The Memo - AI that matters, as it happens, in plain English

AGI: 95%

ASI: 0/50 (no expected movement until post-AGI)

There’s a soundtrack to this edition. ‘Walk my walk’ by Breaking Rust hit #1 on the Billboard Country Digital Song Sales chart, and is AI-generated (NPR, 10/Nov/2025).

Listen: https://youtu.be/g_K0tOwUJ1k

Contents

The BIG Stuff (Xpeng IRON update, OpenAI 1M companies, pre-ASI findings…)

The Interesting Stuff (ERNIE 5.0 2.4T, TPUs GA, GPT-5.1, Tesla 100GW, Airbnb…)

Policy (China v US, OpenAI, copyright, Ilya Sutskever speaks, Greg Brockman…)

Toys to Play With (New Udio replacement, screen watcher, image gen comparison…)

Things I’ve Been Thinking About (Post-ASI + paper on entertainment/meaning…)

Next (Roundtable…)

The BIG Stuff

Xpeng IRON update (5/Nov/2025)

Xpeng’s AI Day 2025, themed ‘Emergence’, showcased a transformative leap in physical AI with the unveiling of the next-gen IRON (plus the Xpeng VLA 2.0 model, Robotaxi, and innovative flying systems). He Xiaopeng, Chairman and CEO, declared Xpeng’s new identity as a ‘global embodied intelligence company’.

The XPENG Next-Gen IRON is equipped with 3 Turing AI chips, with an effective computing power of 3000 TOPS, and at the same time, it is the first to be equipped with XPENG’s first-generation physical world large model. By constructing a high-order combination of capabilities of the “VLT + VLA + VLM”, it realizes the three high-order intelligences of “conversation, walking, and interaction”.

…“VLT large model” is a brand-new large model specifically developed for robots, regarded as the core engine for robots’ autonomous actions, enabling them to achieve in-depth thinking and autonomous decision-making.

Read more via Xpeng.

Here’s a two-minute clip of the humanoid robot walking on stage (link):

Sidenote: The Memo editor, Jess, asks ‘Why did they make the Xpeng robot sexy? 🫠’

Watch an alternative video by EuroNews.

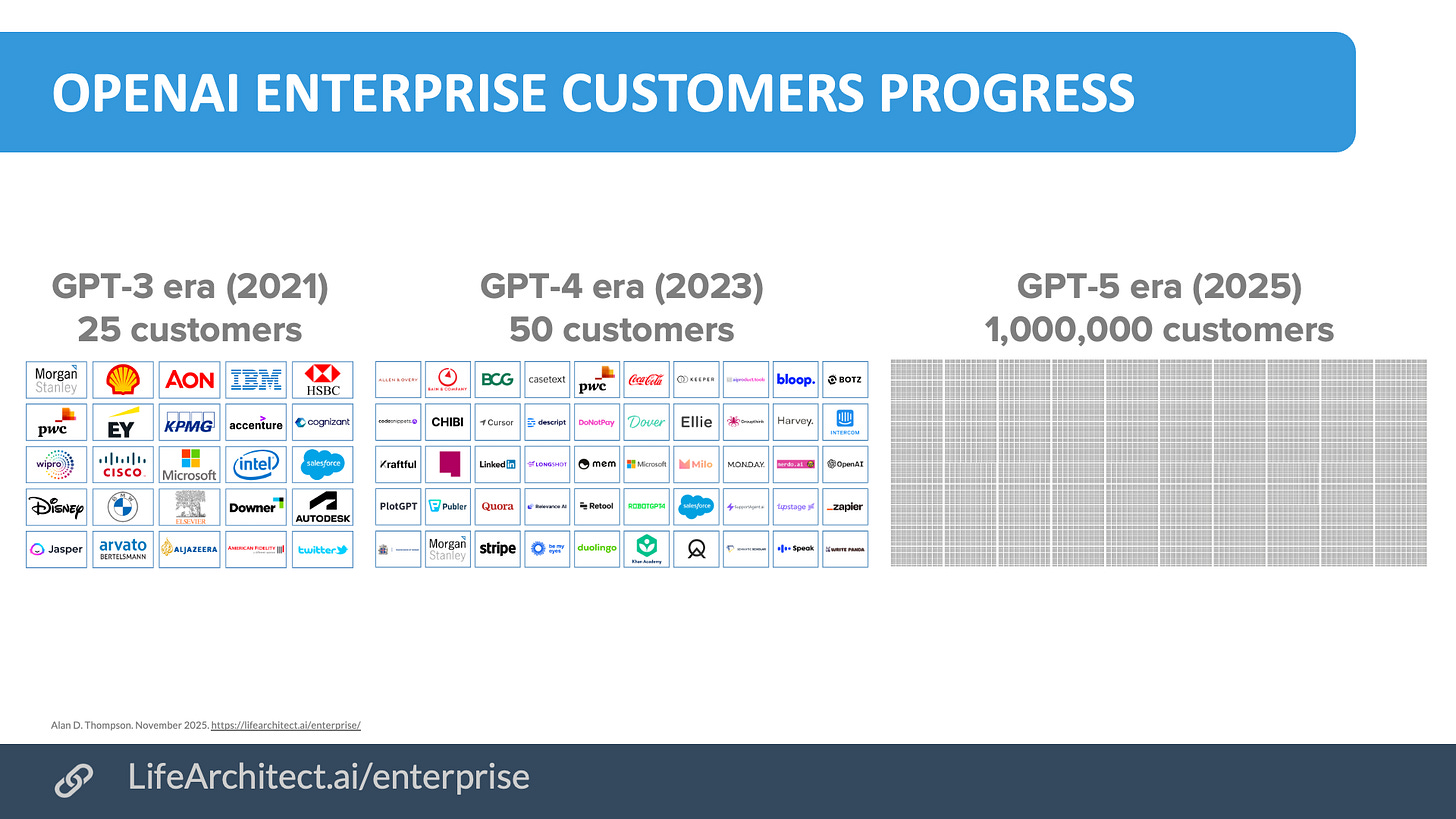

OpenAI secures 1 million businesses (5/Nov/2025)

OpenAI has surpassed 1 million business customers, making it the fastest-growing business platform ever. Companies include Amgen, Commonwealth Bank (hence the opening of the new Sydney office here in Australia!), LG, Singapore Airlines, eBay, T-Mobile, Booking.com, Lowe’s, Target, and Cisco. With over 800 million weekly users familiar with ChatGPT, the adoption rate within businesses has skyrocketed, facilitating rapid pilot completions and reducing rollout friction.

Read more via OpenAI.

I’ve been documenting this since 2020, easily seen in the combined viz above, but here’s a zoom of all 1,000,000 dots, each representing a different business logo:

Exclusive: More human experts are coming to terms with pre-ASI model capabilities (Nov/2025)

Professor Mark Humphries was shocked by his recent experience of a new Gemini model, probably Gemini 3, being applied to the field of transcribing handwritten historical documents. What Gemini did was complex (and worth reading), but the gist is that while transcribing a 1758 merchant’s ledger, Gemini encountered the ambiguous text ‘To 1 loff Sugar 145’. The AI correctly inferred the text meant ‘14 lb 5 oz’ by working backwards from the total cost (0/19/1, or 19 shillings and 1 penny, which is 229 pence) and the unit price (1/4, or 1 shilling and 4 pence, which is 16 pence per pound) to calculate the weight (229 pence / 16 pence = 14.3125 lb, and 0.3125 lb × 16 oz = 5 oz). The author calls this ‘genuine, human-like, expert level reasoning’.
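The ledger arithmetic described above can be checked in a few lines. This is only a minimal sketch of the calculation, not a reconstruction of what Gemini did internally. It assumes pre-decimal British currency (12 pence to the shilling, 20 shillings to the pound), that the 1/4 unit price is per pound of weight, and 16 ounces to the pound. The function names `to_pence` and `weight_from_cost` are hypothetical, chosen for illustration.

```python
from fractions import Fraction

# Pre-decimal British currency and avoirdupois weight (assumed for this ledger).
PENCE_PER_SHILLING = 12
SHILLINGS_PER_POUND = 20
OUNCES_PER_LB = 16


def to_pence(pounds: int, shillings: int, pence: int) -> int:
    """Convert a pounds/shillings/pence amount into pence."""
    return (pounds * SHILLINGS_PER_POUND + shillings) * PENCE_PER_SHILLING + pence


def weight_from_cost(total_pence: int, pence_per_lb: int) -> tuple[int, Fraction]:
    """Work backwards from total cost and unit price to (whole lb, ounces)."""
    weight_lb = Fraction(total_pence, pence_per_lb)
    whole_lb = weight_lb.numerator // weight_lb.denominator
    ounces = (weight_lb - whole_lb) * OUNCES_PER_LB
    return whole_lb, ounces


total = to_pence(0, 19, 1)  # 0/19/1 in the ledger
unit = to_pence(0, 1, 4)    # 1/4 in the ledger, assumed to be per lb
lb, oz = weight_from_cost(total, unit)

print(f"total = {total}d, unit price = {unit}d per lb")
print(f"weight = {lb} lb {oz} oz")
```

Using `Fraction` keeps the division exact, so the ounces come out as a whole number rather than a rounded float.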

…no one asked Gemini to do this. It took the initiative to investigate and clarify the meaning of the ambiguous number all on its own. And it was correct.

…there is something in this new machine that is markedly different. From my admittedly limited tests, the new Gemini model handles this type of data much better than any previous model or student I’ve encountered…

…we need to extrapolate from this small example to think more broadly: if this holds [then] the models are about to make similar leaps in any field where visual precision and skilled reasoning must work together [as] required. As is so often the case with AI, that is exciting and frightening all at once. Even a few months ago, I thought this level of capability was still years away.

What this new Gemini model seems to show is that near-perfect handwriting recognition is better achieved through the generalist approach of LLMs. Moreover, the model’s ability to make a correct, contextually grounded inference that requires several layers of symbolic reasoning suggests that something new may be happening inside these systems—an emergent form of abstract reasoning that arises not from explicit programming but from scale and complexity itself…

...[this new Gemini model] is demonstrating an understanding of the economic and cultural systems in which those records were produced…and then using that knowledge to re-interpret the past in intelligible ways.

Read it: https://generativehistory.substack.com/p/has-google-quietly-solved-two-of

Although the scale of these models has increased dramatically, the technology behind them has not changed substantially since GPT-3 in 2020. Beginning with that model’s capabilities, it was clear what was happening, and I issued a raft of warnings internationally:

19/Sep/2021: ‘AI is outperforming humans in both IQ and creativity in 2021’ https://lifearchitect.ai/outperforming-humans/

20/Jul/2021: ‘AI fire alarm’ https://lifearchitect.ai/fire-alarm/

Jan/2022: ‘AI report submission to UN’ https://lifearchitect.ai/un/

25/Oct/2023: ‘Leaders guilty of negligence’ https://lifearchitect.ai/leaders-guilty-of-negligence/

2021–present: The Memo editions including the one that you’re reading right now!

What’s particularly fascinating is how hidden some of these experts’ discoveries are. We have professors and subject matter experts and peak performers in niche fields around the world privately testing frontier models against their specialty. (I picture them sitting in their lab with the same excited or shocked or horrified expression on their face that I had four years ago with the Leta experiments.)

Each time, they’re finding that the model outperforms human experts, with private or public statements like Mark’s: ‘What this new [frontier] model seems to show is that [my field’s specialist data and analyses] is better achieved through the generalist approach of LLMs.’

We saw it in a big way last year (Jun/2024) when Adam Unikowsky, a former law clerk to Justice Antonin Scalia who has won eight Supreme Court cases as lead counsel, said:

Claude is fully capable of acting as a Supreme Court Justice right now... I frequently was more persuaded by Claude’s analysis than the Supreme Court’s… Claude works at least 5,000 times faster than humans do, while producing work of similar or better quality…

We’re going to keep seeing this happen.

And they’ll keep acting surprised.

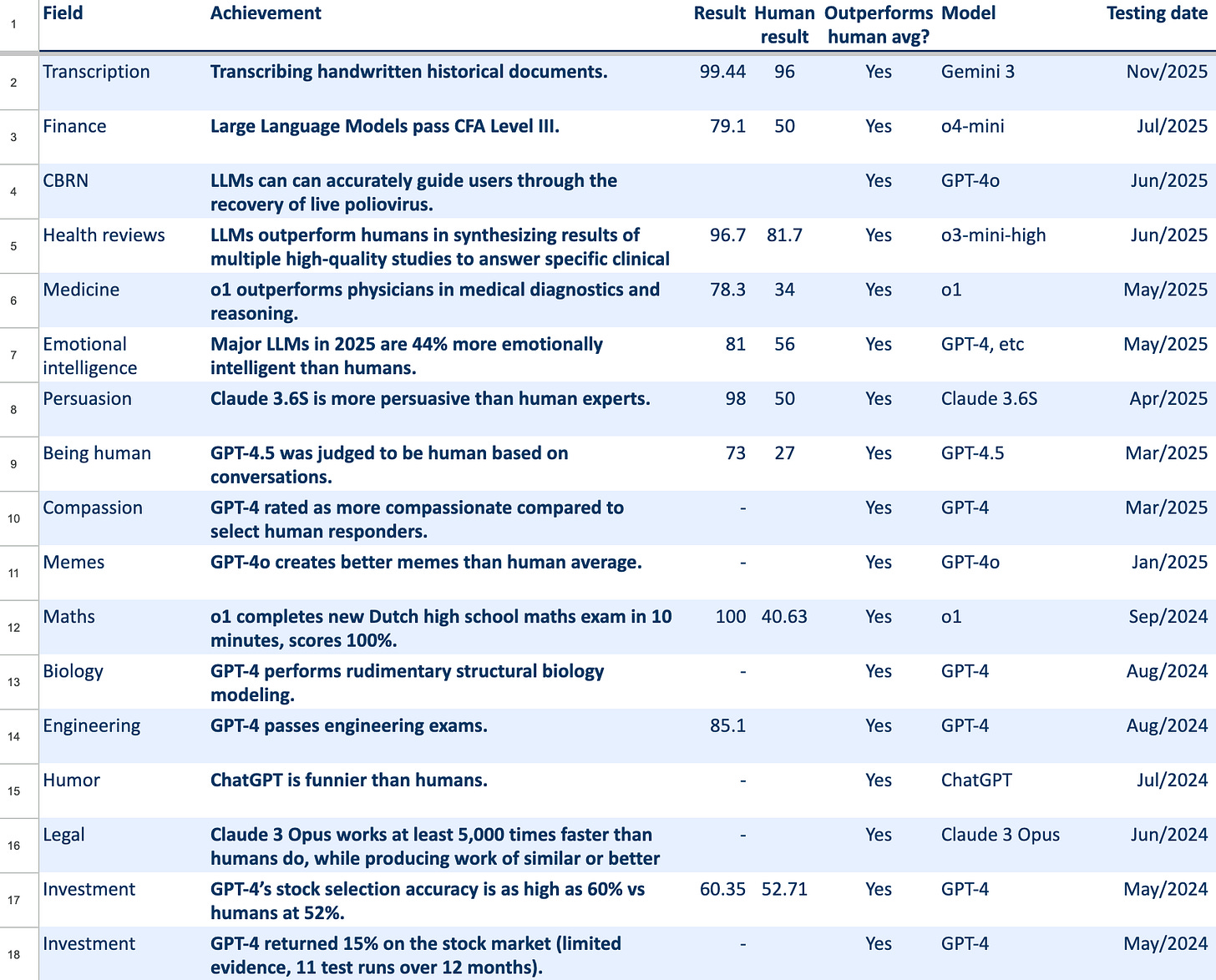

I continue to track superintelligence indicators at: https://lifearchitect.ai/asi/

On that page, I’ve been tracking major pre-ASI capabilities for a while, screenshot below: https://lifearchitect.ai/asi/#capabilities

The Interesting Stuff

Google Ironwood TPUs: General availability (6/Nov/2025)

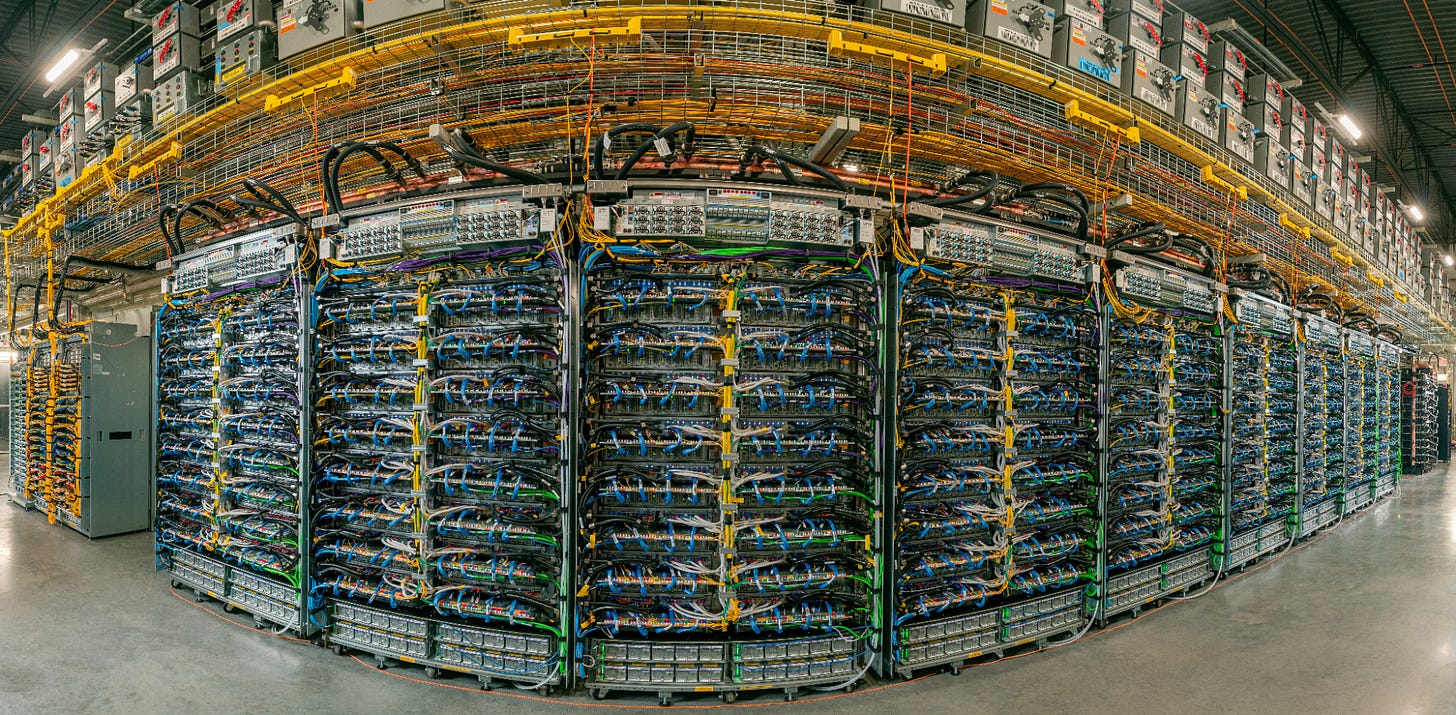

Near the beginning of my keynotes, I still feature video of a Microsoft datacentre to show the birthplace and home of GPT. Google’s latest photo looks far more impressive though: racks with over 9,000 TPUs serving Gemini, Claude, and more.

TPUs have been Google’s trade secret and strategic advantage since 2015. A decade later, Google Cloud has announced the ‘general availability’ of the latest Ironwood TPUs, marking a significant advancement in AI infrastructure.

Today’s frontier models, including Google’s Gemini, Veo, Imagen, and Anthropic’s Claude train and serve on Tensor Processing Units (TPUs)…

10× peak performance improvement over TPU v5p and more than 4× better performance per chip for both training and inference workloads compared to TPU v6e (Trillium)…

9,216 chips in a superpod linked with breakthrough Inter-Chip Interconnect (ICI) networking at 9.6Tb/s. This massive connectivity allows thousands of chips to quickly communicate with each other and access a staggering 1.77 Petabytes of shared High Bandwidth Memory (HBM)…

Read more via Google Cloud Blog.
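As a back-of-the-envelope check on the superpod figures quoted above, the sketch below divides the shared HBM across the chips and converts the interconnect speed from bits to bytes. It assumes decimal units (1 PB = 10^15 bytes, 1 Tb = 10^12 bits) and that the shared memory is spread evenly across all 9,216 chips. The quote does not say exactly what the 9.6Tb/s is measured across, so the second figure is only a unit conversion.

```python
# Figures taken from the Google Cloud quote above; decimal units are assumed.
CHIPS_PER_SUPERPOD = 9_216
SHARED_HBM_BYTES = 1.77e15   # 1.77 PB of shared HBM
ICI_BITS_PER_SECOND = 9.6e12  # 9.6 Tb/s Inter-Chip Interconnect

# Even split of shared HBM across every chip in the superpod, in GB.
hbm_per_chip_gb = SHARED_HBM_BYTES / CHIPS_PER_SUPERPOD / 1e9

# Interconnect speed expressed in terabytes per second (8 bits per byte).
ici_tb_per_second = ICI_BITS_PER_SECOND / 8 / 1e12

print(f"HBM per chip: about {hbm_per_chip_gb:.0f} GB")
print(f"ICI: {ici_tb_per_second:.1f} TB/s")
```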

The Memo features in recent AI papers by Microsoft and Apple, has been discussed on Joe Rogan’s podcast, and a trusted source says it is used by top brass at the White House. Across over 100 editions, The Memo continues to be the #1 AI advisory, informing 10,000+ full subscribers including RAND, Google, and Meta AI. Full subscribers have complete access to all 25+ AI analysis items in this edition!

Baidu ERNIE 5.0 (13/Nov/2025)

We’ve been waiting for this model for a while.

Total parameter count is 2.4T (MoE), and I’ve estimated the training dataset at 100T tokens.
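For a rough sense of scale, the two figures above give a tokens-to-parameters ratio. This is a sketch only: the 100T token count is my estimate rather than a figure published by Baidu, and the ratio uses total parameters, not the (unstated) number of parameters active per token in the MoE.

```python
# Both inputs come from the paragraph above; the token count is an estimate.
TOTAL_PARAMETERS = 2.4e12          # 2.4T total parameters (MoE)
ESTIMATED_TRAINING_TOKENS = 100e12  # 100T tokens, estimated

tokens_per_parameter = ESTIMATED_TRAINING_TOKENS / TOTAL_PARAMETERS

print(f"About {tokens_per_parameter:.1f} tokens per parameter")
```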

The benchmarks were obfuscated (13/Nov/2025), and my testing showed unimpressive results using the standard ALPrompt.