To: US Govt, major govts, Microsoft, Apple, NVIDIA, Alphabet, Amazon, Meta, Tesla, Citi, Tencent, IBM, & 10,000+ more recipients…

From: Dr Alan D. Thompson <LifeArchitect.ai>

Sent: 17/Sep/2024

Subject: The Memo - AI that matters, as it happens, in plain English

AGI: 81%

Robin Sloan, author (Mar/2024)

‘I’m trying hard to imagine an average day [post ASI].

I can’t even come up with a half-decent scene.

Will people live in buildings? Will they wear clothes?

My imagination is almost physically straining.

It’s easier to write the defeat than the victory, isn’t it?

Easier to write the failure than the success.

For some reason, the success seems like it might be … boring.

…Turns out, no, it’s not boring at all. Plot gallops on, even at the outer limits of matter and energy. Even at the far reaches of freedom, the stories are only just beginning.’

Contents

The BIG Stuff (o1 analysis, humanoid robots value, Last Exam, OpenAI profit…)

The Interesting Stuff (Neuralink update from Noland, AlphaProteo, Tao, Oracle…)

Policy (AI in judgment, TSMC US, China H100s, datacenter=critical infrastructure…)

Toys to Play With (AI quiz, clone webpage via Claude, NotebookLM + demos…)

Flashback (Google DeepMind Gemini…)

Next (Roundtable…)

The Memo continues to be a primary source of AI analysis for all humanity, including major governments and enterprises. The Memo features in foundational papers across organizations like Accenture, Brookings, NYU, Weights & Biases, and more (all paper links at the end of this edition).

Apple referenced The Memo for its new model paper and visualizations this month, leaning on analysis of model sizes and parameter counts provided in this publication.

Thanks to ‘LiliTheAdventurer’ for this AI-generated track via the new Suno v3.5, ‘Gone’. It’s hard for me to remember that this has nothing to do with 1980s MIDI control signals. It is a complete song in the style of a studio performance, conceptualized from scratch, with vocals in Japanese…

The BIG Stuff

Exclusive: o1: Smarter than we think (17/Sep/2024)

Building on the special edition from last week (12/Sep/2024), I’ve released a new page highlighting the major advancements of OpenAI o1. The comprehensive analysis includes size estimates for the o1 model.

Ready for new discoveries and new inventions

Reasoning via reinforcement learning and increased test-time compute

Hidden CoT (chain of thought)

Increased self-awareness

The most dangerous model ever released (so far...)

Pricing

Observations

Conclusions

Solar system model (HTML animation)

Coding competitions (Codeforces)

Mathematics (Prof Terry Tao)

Read more: https://lifearchitect.ai/o1

How ARK is thinking about humanoid robotics (10/Sep/2024)

As Elon Musk said during Tesla’s first-quarter earnings call, “If you've got a sentient humanoid robot that is able to navigate reality and do tasks at request, there is no meaningful limit to the size of the economy.” Musk was sharing a vision that we believe is turbocharging the robotics industry, creating new robotics companies and increasing venture investments focused on its promise.

…if humanoid robots are able to operate at scale, they could generate ~US$24 trillion in revenues, split roughly equally between household and manufacturing robotics, according to ARK’s research…

At a cost of US$16,000, for example, a humanoid robot would have to deliver little more than a 5% gain in productivity relative to its human counterpart to become economically viable.

Read more via ARK Invest.

It’s worth remembering that robots can work 24×7, probably without air conditioning, and in the dark…
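To put the 24×7 point next to ARK’s US$16,000 example, here is a rough Python sketch that spreads a robot’s purchase price over the hours it actually works. The five-year lifetime and the utilization figures are hypothetical assumptions (not ARK’s), and the sketch ignores maintenance, electricity, financing and downtime. Treat it as an illustration of the arithmetic, not as ARK’s cost model.

```python
# Hypothetical amortization sketch: hardware cost only. Lifetime and
# utilization are illustrative assumptions, not figures from ARK's research.

HOURS_PER_YEAR = 24 * 365  # a robot running 24x7 all year: 8,760 hours


def hourly_hardware_cost(price_usd: float, lifetime_years: float,
                         utilization: float = 1.0) -> float:
    """Spread a one-off purchase price over the hours the robot works.

    utilization is the fraction of all hours spent working
    (1.0 = genuinely 24x7; 40/168 = a human-style 40-hour week).
    """
    if lifetime_years <= 0 or not 0 < utilization <= 1:
        raise ValueError("lifetime must be positive and utilization in (0, 1]")
    working_hours = HOURS_PER_YEAR * lifetime_years * utilization
    return price_usd / working_hours


if __name__ == "__main__":
    price = 16_000  # ARK's example price (US$)
    for utilization in (1.0, 40 / 168):
        cost = hourly_hardware_cost(price, lifetime_years=5, utilization=utilization)
        print(f"utilization {utilization:.2f}: US${cost:.2f} per working hour")
```

Under these assumptions, a robot working around the clock comes out at roughly US$0.37 of hardware cost per working hour, versus about US$1.53 if it only worked a 40-hour week. The gap is simply the ratio of hours: 168 / 40 = 4.2×.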

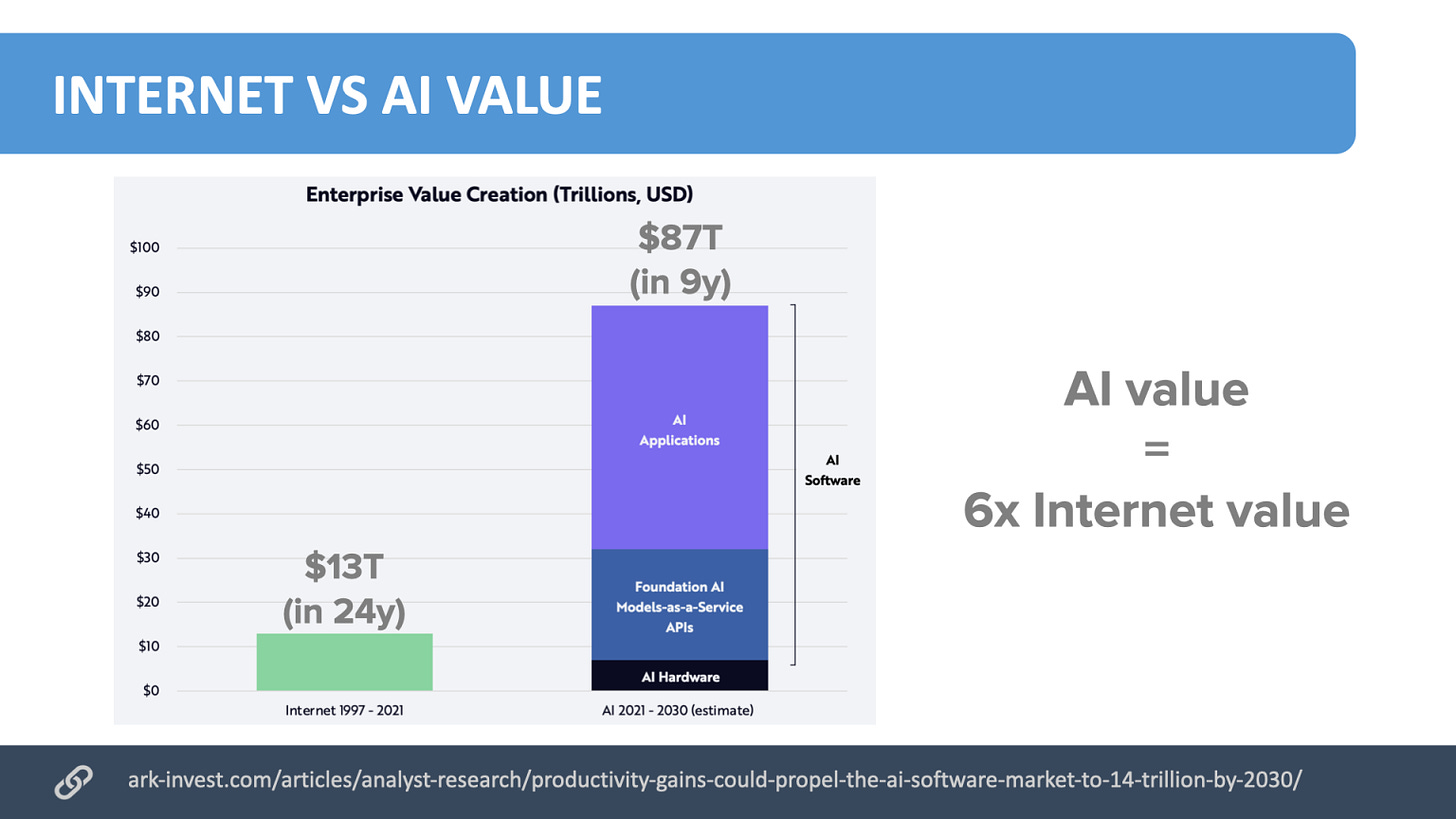

I still use ARK’s Jun/2022 AI figures in my keynotes:

1X NEO: I Lived With a Humanoid Robot for 48 Hours by S3 (8/Sep/2024)

In last week's S3, 1X shared their plans to quickly deploy Neo, their new robot, in homes. So I asked if they'd put Neo in my home.

This is worth watching in full. I added an ‘info’ note to my AGI countdown because of how close it comes to fulfilling Woz’s AGI definition (walk into a strange house and make a cup of coffee).

Watch the video: https://youtu.be/Sb6LMPXRdVc

Reuters: AI experts ready ‘Humanity’s Last Exam’ to stump powerful tech (17/Sep/2024)

Scale AI has launched a global initiative called ‘Humanity’s Last Exam’ to challenge AI systems with difficult questions that extend beyond current benchmark tests.

"[o1] destroyed the most popular reasoning benchmarks… They're now crushed."

— Dr Dan Hendrycks, creator of MMLU and MATH (Reuters, 17/Sep/2024)

This project, organized by the Center for AI Safety and Scale AI, aims to evaluate AI's true capabilities, particularly in abstract reasoning, by crowdsourcing over 1,000 challenging questions.

Read more via Reuters, and source via Scale AI.

You can submit a question to win US$5,000. Scale AI suggests that items should only be designed by PhD experts(!) and:

Questions should not be simple trick questions. Questions should not be straightforward calculation/computation questions. The questions for this dataset can be so challenging that they are impractical for real-world human exams or problem sets. The answers should not be easily found through a Google search.

It should impress you if an AI correctly answers your question. We found questions written by undergraduates tend to be too easy for the models. As a rule of thumb, if a randomly selected undergraduate can understand what is being asked, it is likely too easy for the frontier LLMs of today and tomorrow.

Submit an item: https://agi.safe.ai/submit

This is another comprehensive edition of The Memo, coming in at nearly 5,000 words. And that’s after filtering out ~95% of the AI ‘news’ that comes across my desk. Let’s jump in…